Oxford University

Driving literacy skills in South Africa with AVBOB: Road to Literacy

At a special ceremony, we announced the 500 beneficiaries of mobile trolley libraries donated as part of the Road to Literacy campaign in South Africa.

Now in its third year, the campaign, run in partnership with AVBOB Industries, aims to help improve reading and literacy skills. It responds to the Progress in International Reading Literacy Study (PIRLS) 2021 report, which revealed that 75% of South African schools face a shortage of reading materials, significantly higher than the international average of 45%.

Speaking about the positive impact this campaign has on South African children, Karen Simpson, Managing Director of OUP Africa, said:

“We are exceptionally proud of our involvement in this initiative, and we recognize the significance of this partnership—especially in schools where it can make the biggest difference to learning outcomes.”

As part of this year’s R32.5 million investment, we have donated 500 books for each of the 500 trolley libraries, which will be delivered to deserving primary schools and other educational non-profit organizations across the country. All 11 of South Africa’s official written languages are represented in these high-quality books, which are aimed at school-age readers.

After more than 7,500 nominations for primary schools and non-profit organizations, we announced the 500 beneficiaries who would receive a mobile trolley library at a ceremony hosted by AVBOB and streamed online.

Carl van der Riet, CEO of AVBOB Industries, spoke about the importance of the campaign and our partnership:

“We are committed to improving education and literacy in South Africa, empowering communities through access to quality learning resources. Our mutual status empowers us to reinvest into meaningful initiatives. By aligning our business success with social progress, we bring about positive change in our communities.”

Watch the full beneficiary announcement stream on AVBOB’s YouTube channel here.

Championing reading and creativity through the Oxford Big Read 2025

This year, the Oxford Big Read brought together students from 15 countries in a truly global celebration of reading, creativity, and collaboration.

The Oxford Big Read is an international competition that encourages students to read OUP books and complete a creative project based on their reading. With three competition levels aligned to students’ ages, the Oxford Big Read continues to foster literacy development at every stage.

Participation soared this year across the competing countries: Colombia, Chile, Costa Rica, Peru, Mexico, Japan, South Korea, Taiwan, Vietnam, Thailand, China, Indonesia, Cambodia, Malaysia, and India. India alone saw a 363% year-on-year increase in submissions.

After months of reading, creativity, and exceptional student participation across continents, we are delighted to announce the Oxford Big Read Global 2025 winners. Their entries demonstrated outstanding imagination, storytelling skills, and deep engagement with their reading.

Level 1 – ages 5-8

Participants read an OUP book and then designed a new cover for it.

Level 2 – ages 9-12

Participants read an OUP book and then either wrote a response describing what they found interesting about it, or designed a poster representing their understanding of it.

Level 3 – ages 13-15

Participants either read an OUP book and wrote a critical review discussing its theme, plot, and characters, or compared and contrasted two books.

At each level, students were rewarded for their contributions with prizes ranging from certificates and digital subscriptions to national prizes sponsored by Faber-Castell, Amazon, and The Hindu Young World. The prestigious global awards included iPads and even an internship opportunity with our English Language Teaching Graded Reader team.

With global winners representing Mainland China, Vietnam, and Thailand, Oxford Big Read continues to connect students across continents through the joy of reading. Congratulations to all our winners and runners-up for their exceptional achievements.

Find out more about the Oxford Big Read here.

Keeping world-leading international law resource open access

The Max Planck Encyclopedia of Public International Law has reached its goal for renewals and will continue to publish on a Subscribe to Open model until at least March 2027.

The Max Planck Encyclopedia of Public International Law is the leading resource in the field, containing peer-reviewed articles on every aspect of international law, the discipline that provides a common legal framework for the whole world.

By moving this comprehensive, analytical resource to an open access Subscribe to Open model, we have made it possible for students, scholars, practitioners, and governments worldwide to access its content free of charge.

Subscribe to Open in practice

The Max Planck Encyclopedia of Public International Law is OUP’s first experiment with the Subscribe to Open model, which is typically applied to journals rather than books. Under this model, institutions continue to pay for their subscriptions, and if enough of them renew, the content is opened up to all readers. Thanks to the support of our institutional and library customers, this valuable content is freely accessible to all for a second year.

As a result of the Subscribe to Open model, usage of the encyclopaedia has increased substantially from 2024 to 2025:

- A 1,291% increase in overall usage

- A 556% increase in usage from low- and middle-income countries

- Access from users in 235 territories around the world, up from 199, including all 193 United Nations member states.

Professor Anne Peters, Director at the Max Planck Institute for Comparative Public Law and International Law, Heidelberg, and General Editor of the Max Planck Encyclopedia of Public International Law, said:

“We are delighted to have this opportunity to participate in a pioneering open access project, particularly in view of the foundational role played by the Max Planck Society in launching the move towards open scholarship. Open access can contribute to epistemic justice and pluralism—and what we call “encyclopaedic knowledge” should not only be distributed freely, but also be built up from a globally diverse set of standpoints.”

Rhodri Jackson, Director of Open Access Publishing and Strategy at OUP, said:

“Innovation in open access models is in line with our mission: not only to publish excellent research and scholarship but to make it available worldwide. We are committed to learning from all our initiatives—successful and less successful—and to sharing our results transparently. The first year of Subscribe to Open for the Max Planck Encyclopedia of Public International Law has been a great success and we’re excited to see what happens in year two.”

Although we have reached our renewal goal this year, that goal must be met every year to keep this resource open access. By renewing your subscription, you help to ensure that this valuable resource remains open and accessible to all, year after year.

Find more information, including details about renewals, here.

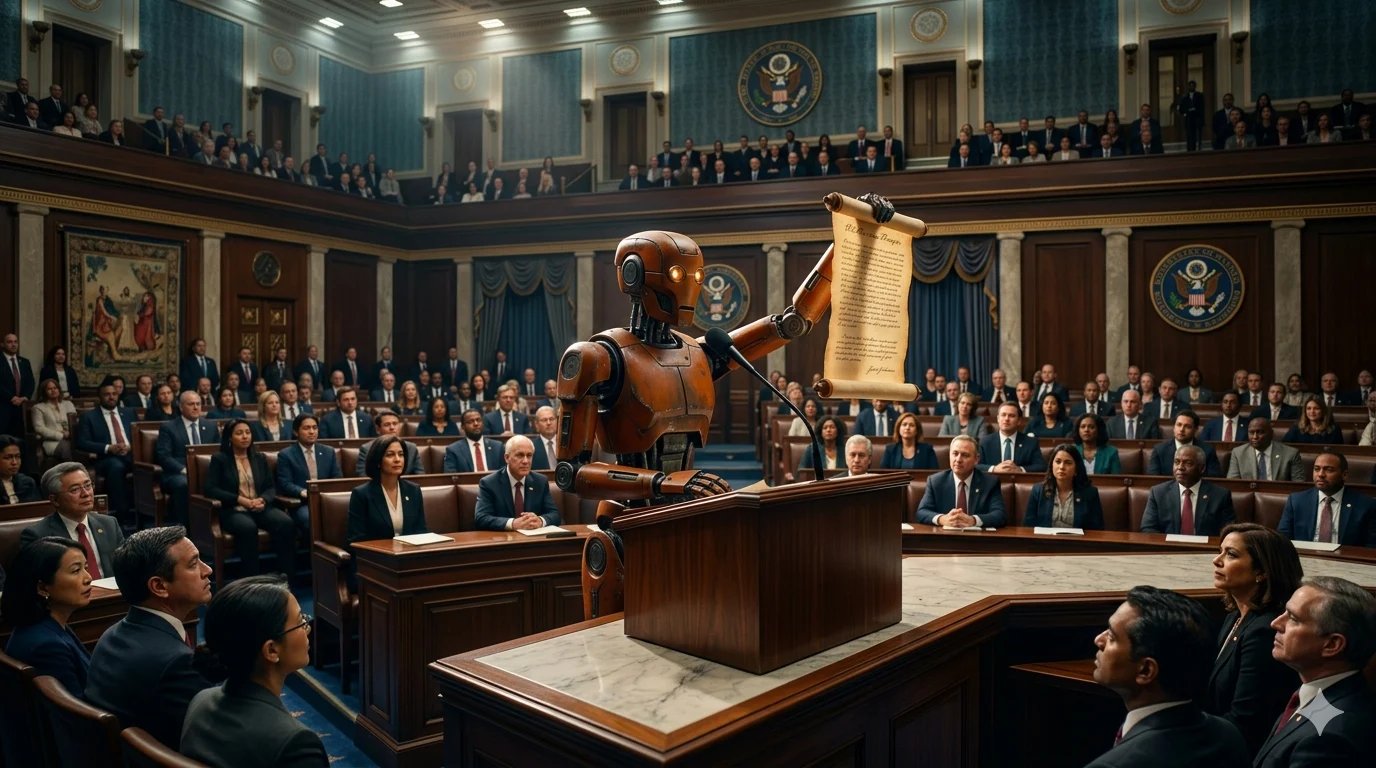

Expert Comment: In Claude We Trust? Evaluating the New Constitution

Professor Yuval Shany. Image credit: Ian Wallman.

On 21 January 2026, Anthropic published its ‘New Constitution’ for Claude, a family of large language models (LLMs) that perform general-purpose generative AI functions. The Constitution, an 84-page document, is presented as a ‘foundational document that both expresses and shapes who Claude is’. It also enumerates actions that Claude should refrain from undertaking (‘hard constraints’) and identifies considerations the system should weigh when deciding whether to perform certain actions.

A few weeks after the Constitution was published, two real-world situations tested its normative outer boundaries: Anthropic’s showdown with the US Department of War (DoW) over legal limits on the use of Claude, and the actual use of Claude for targeting by the US military in the war in Iran.

These developments highlight the importance of introducing strong human rights safeguards into the Constitution.

No place for human rights?

According to the Constitution, Claude should conform to four sets of values, applied in the following hierarchical order: safety, ethics, compliance with Anthropic’s guidelines and helpfulness. Put differently, Claude should strive to assist users unless Anthropic has instructed it not to, or it deems the request unethical or unsafe.

The Constitution also introduces a number of ‘hard constraints’: specific no-go areas that should never be entered, including attempting ‘to kill or disempower the vast majority of humanity or the human species as a whole’ or assisting ‘any individual or group with an attempt to seize unprecedented and illegitimate degrees of absolute societal, military, or economic control’.

Although some ethical standards enumerated in the Constitution overlap with human rights (e.g., privacy, protection from harm, rule of law, equal treatment, the right to access information and political freedom), the document never explicitly uses the term ‘human rights’. This contrasts with the 2023 version of the constitution, which referred to the UN Universal Declaration of Human Rights.

This means that many important human rights protections that could be relevant to the operation of Claude – for example, the right to liberty, freedom of religion and the right to intellectual property – have not been clearly integrated into the Constitution.

Anthropic vs the US Department of War

Shortly after the Constitution was promulgated, Anthropic made headlines in two dramatic contexts, both of which underscore the importance of developing effective normative backstops.

First, on 4 March 2026, the Department of War designated Anthropic a supply-chain risk because it refused to allow the Department to use Claude for mass domestic surveillance and for operating lethal autonomous weapon systems. The DoW instead signed a contract with OpenAI to provide substitute AI systems.

As Dr. Brianna Rosen from the Blavatnik School of Government explained, the DoW’s insistence on being able to deploy AI systems for ‘any lawful use’ left a governance gap, since neither US law nor, in fact, international law clearly bans mass surveillance or the use of autonomous weapon systems under all circumstances.

Delineating the permissible scope of such extraordinary capabilities through contractual negotiations between the US government and Anthropic (or OpenAI) appears to provide weaker human rights guarantees than embedding universally accepted protections directly in the AI system itself, through a Constitution or a comparable normative framework. This is especially so given the difficulties of monitoring and enforcing state compliance in sensitive domains such as national security.

Dr. Rosen is also right to point out that Anthropic’s negotiating position on mass surveillance, which addresses domestic surveillance only, may already fall short of international human rights standards in the field, which also cover foreign surveillance.

Second, it has been widely reported that Claude systems, still in use by the US military, have been employed in the war in Iran for target selection. It has also been speculated, albeit without hard evidence, that the use of AI systems may have contributed to one high-profile operational mistake (the targeting of an Iranian school) through reliance on out-of-date maps of the attacked area.

Here again, questions arise as to whether the Constitution, as currently drafted, contains appropriate safeguards against reliance on AI systems in contexts involving lethal consequences.

Arguably, a more human rights-oriented approach would include within the system’s constitutional norms an explicit requirement that any use of the AI system in armed conflict comply with the basic principles of international humanitarian law (which also give effect to human rights principles), including flagging precautionary obligations such as real-time target verification before attacks are recommended.

In this policy space, reliance on AI systems may not only result in operational mistakes; it might also perpetuate accountability gaps (enabling humans to blame outcomes on the AI). In such cases, embedding human rights ‘by design’ in the constitution that governs an AI system’s operation could offer much more effective protection against violations of basic individual rights.

Read an expanded edition of this article (co-written with Dr. Noa Mor, Prof. Renana Keydar and Prof. Omri Abend) via the Institute for Ethics in AI blog.
